When & How to Use Google Translate

Picture 57.3: Google Translate should be used as a tool for translation the way a stick is used for drilling – when used cautiously and combined with other methods (such as dictionaries), it is a tool that can help you get satisfying results. Credit: Tool Usage by Valerie, (CC-by-nc-nd)

Based on the evaluations in Teach You Backwards, there are some situations for which Google Translate (GT) is an excellent tool, some for which it is helpful as part of a broader translation strategy, and many where it should be avoided or cannot be used.

The previous chapters of this study show that any given piece of any given translation in GT falls into one of three categories:

- Google Translate knows

- Google Translate guesses

- Google Translate makes stuff up

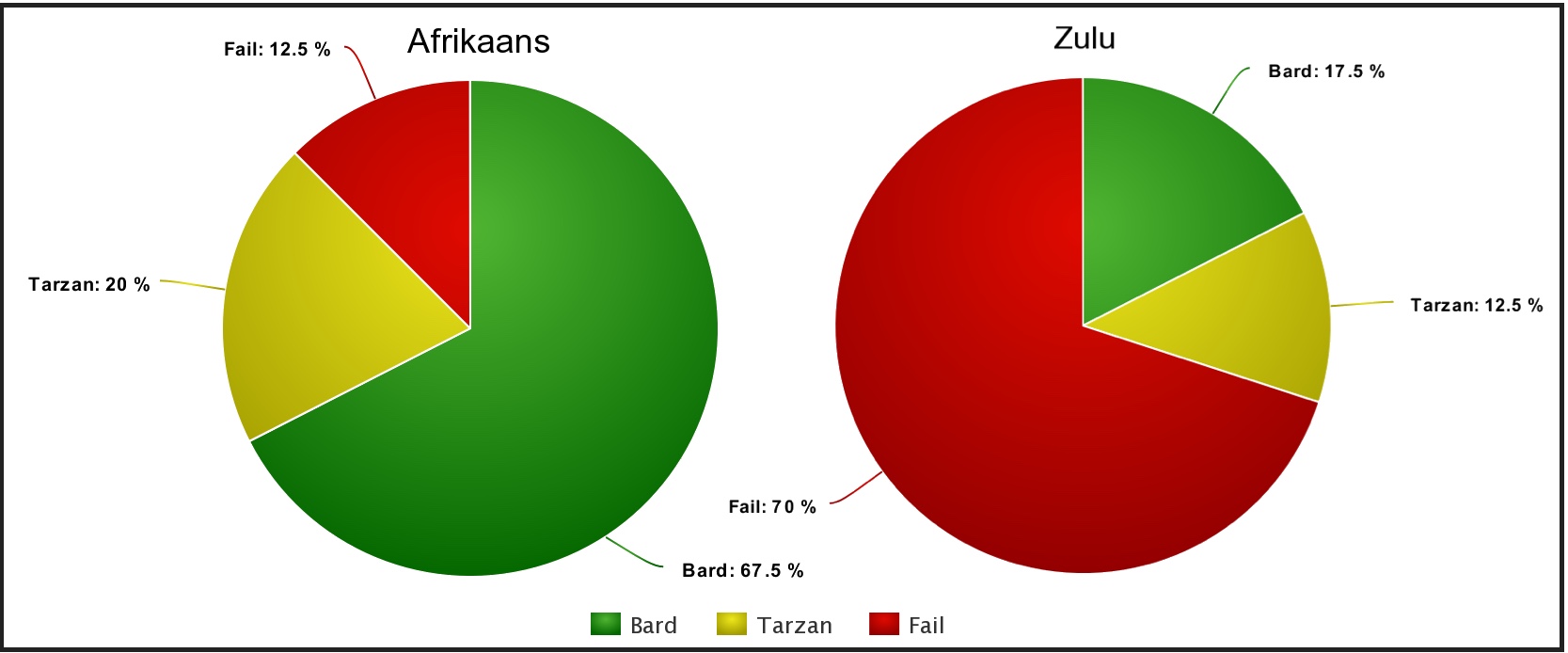

Your problem as a user of GT is that you have no way of knowing what mix of those three applies to your particular text. For GT’s top-tier languages, many of the translations will largely combine knowing and guessing, though making stuff up remains an active part of the algorithm. For the top 13 or so languages, you have a pretty good chance of getting results that convey much of the original intent, in certain translation scenarios, and the next 20-odd languages will transmit the broad sense of a text more often than not. For the base of the pyramid, though, about 71 languages, fake data factors in too highly for you to have any confidence in the results put forward. Figure 7 shows the proportion of invented results, among 2140 translations in 107 languages, while Figure 8 shows Afrikaans (GT’s best-performing language) versus Zulu, its fellow official South African language (about three quarters of the way down the overall ranking). The red in these graphs is your warning that GT translations should never be considered definitive, but can be helpful for advisory purposes vis-à-vis English.

Figure 8: Two languages that share the same geographic territory in South Africa, and are often spoken by the same people, have nearly inverse scores in GT. Afrikaans scored best among all 107 languages tested by TYB, yet still failed 1/8 of the time. You are strongly advised to inspect the scores for your language at http://kamu.si/gt-scores before you decide how much faith to put in GT output, keeping in mind that fake data may be part of any translation in any language, by algorithmic design.

To make use of the ongoing efforts the author directs to build precision dictionary and translation tools among myriad languages, and to play games that help grow the data for your language, please visit the KamusiGOLD (Global Online Living Dictionary) website and download the free and ad-free KamusiHere! mobile app for iOS (http://kamu.si/ios-here) and Android (http://kamu.si/android-here).

Situations When GT is a Good Tool1

Picture 58: Spanish ranks 5th in the Bard rankings and 4th in the Tarzan rankings. The GT rendering in English has several mistakes and peculiarities, but satisfactorily conveys the main points of the text – you could plan your visit to the local festival based on the translated information. Credit

Let’s accentuate the positive first. From several other languages to English, GT is often capable of producing understandable renditions of the author’s original intent. Users who want to get the main points of a news article or a fairly formal message will usually receive a passable translation, and sometimes an excellent one,2 from the better languages. However, two notes of caution are necessary. First, I had no resource for an objective test from 107 other languages toward English, so my confidence scores are based on the admittedly flawed premise of flipping performance from English out. Second, most users will have no way to verify whether the translation is correct, so they must take a leap of faith that is only marginally justified.

The best time to use Google Translate is when you yourself are the audience. If you plug in a text from another language, you are fully aware that the translation is machine generated, and you will read it with due caution. Conversely, the worst way to use GT is to send something to somebody else that you have had blindly translated into a language you cannot read, because they will not know that the words before them include guesses and fabrications. A comparison is cooking for yourself versus preparing food at a restaurant. When you scrounge for food at home, you can throw random ingredients together, and suffer through the result if it doesn’t work out. That same experimental plate would sit very badly on the tongue if served to a customer who expected that the chef knew how to cook.

In general, longer texts are better, because you will be able to mentally smooth out inevitable mistakes by understanding the broader context. One of our reviewers reported detailed success using this strategy for initial translations from Hebrew to English, for example, and a journalist from the New York Times discusses GT as his starting point for rough translations for the same pair [zotpressInText item=”{9SW2QR5C}”].3 Perhaps the best use of Google Translate to extract useful information was by American researchers who found Russian-language articles in Ukraine that helped them trace the criminal conspiracy behind the first impeachment of America’s 45th president.4 The elegance of the words was unimportant, but the evidence they gleaned from the texts for their personal understanding gave them the leads they needed to pursue the story.

If you are able to read with a forgiving eye, GT is prescribed as generally beneficial and mostly harmless in the top 36 languages as listed in http://kamu.si/gt-scores, though with guaranteed fatal side effects in some percentage of translations. For the 71 lower-scoring languages, translations to and from English should be regarded with tremendous caution, even in non-sensitive situations.

Picture 58.1: When viewed as a whole, the big picture can look quite good in GT for larger documents in the languages that scored near the top of TYB tests, like the cheerful mural in this image from Renens, Switzerland. Closer inspection will reveal chips and cracks in the computer output, often losing the meaning of particular phrases and sometimes reversing the author’s intent – but you can usually appreciate such documents if you do not need to rely on the details. Compare this image to Picture 64, which hints at the big picture between English and most GT languages, and also speaks to languages at the top tier when the texts do not resemble Google’s formal training material. Photos by author.

From English toward other languages, I propose that our scores are indicative of reliability for each language. I recommend use of GT for informal purposes, such as a reader of a high-scoring language reading an article or email from English, with a healthy dose of skepticism. GT is also an excellent writing aid when you have an intermediate knowledge of the target language – that is, if you already have a sense of what the output should be, GT can confirm your intuition and provide correct spellings and accents, and sometimes correct agreements (though DeepL usually provides a wider range of options for the languages it covers, including alternative registers).

[If you can provide a good translation of that final recommendation to the affected languages, please send it along, so that search engines will present that information to speakers of the relevant languages.]

Students are encouraged to use GT for homework, but not in the way you might think. 6 Google will always make mistakes, and your teacher will always know, so you should never submit a raw GT translation if you want a good grade. (In one study, English teachers of Japanese students could identify machine translations about 3/4 of the time, with the remaining quarter uncertain whether the tell-tale goofs were more typical of a person or GT [zotpressInText item=”{YLULPBV3}”].) However, performing a test translation with GT and then tweaking it into shape can be a fantastic exercise for learning a language, engaging you in “higher order thinking” [zotpressInText item=”{KR5VQCX2}”] about nuances you might not otherwise confront. You can learn a lot, and enjoy the hunt, by using the machine output as a springboard for investigating how a native speaker would render an expression.

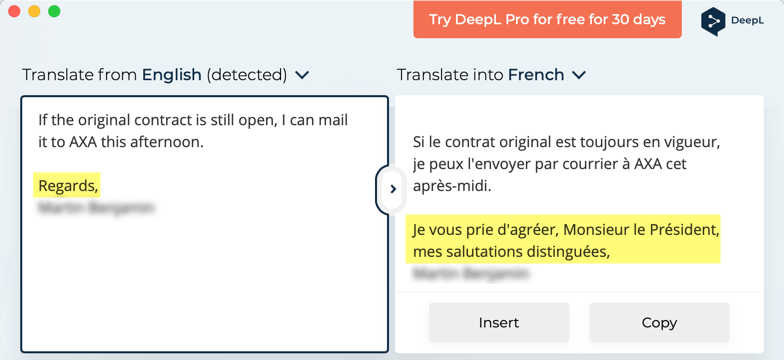

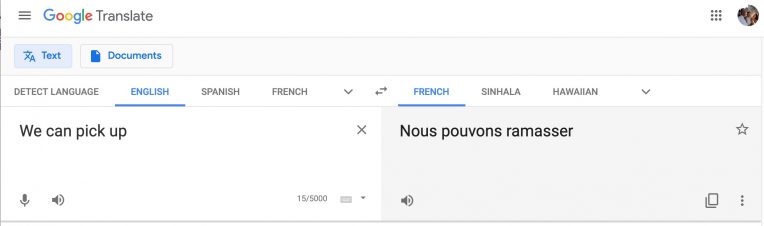

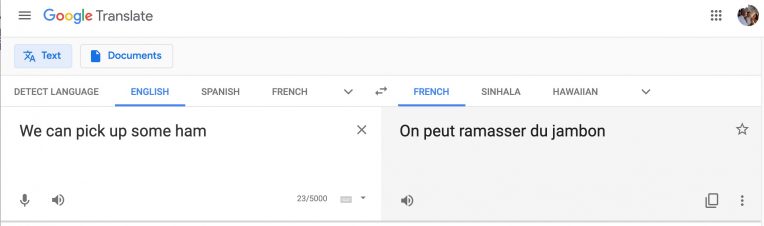

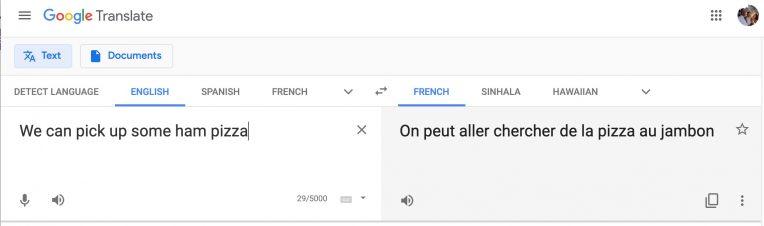

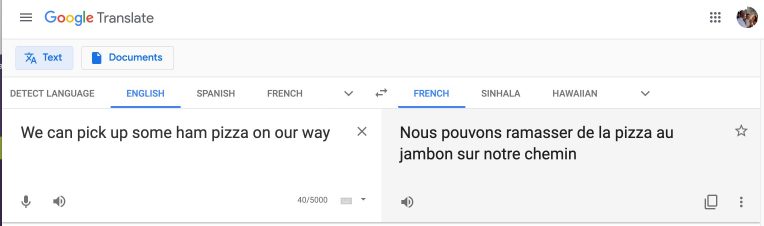

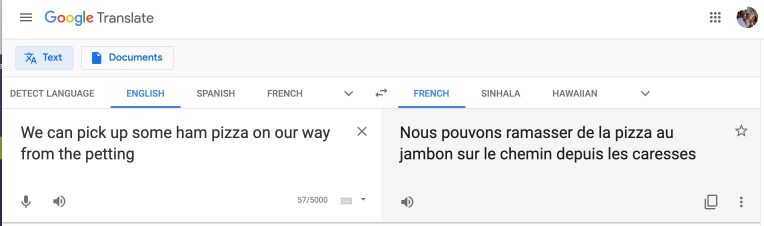

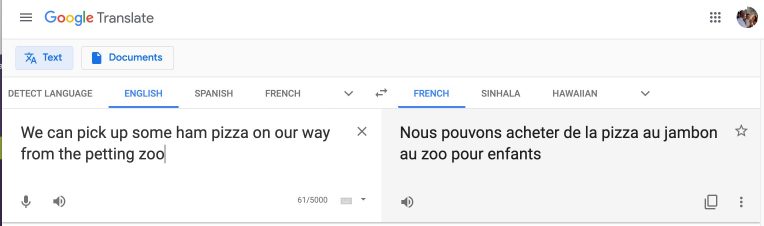

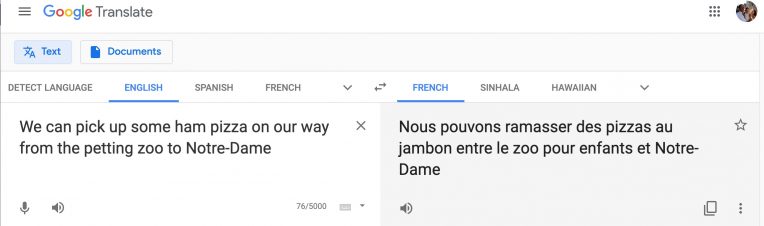

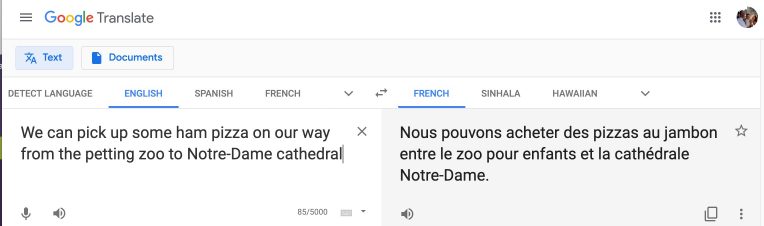

Examine the animated translation in Picture 58.1. You can see that GT’s translation of “pick up” to French shifts as the sentence grows. At some moments the proposal is “ramasser“, which is the word you would use to tell a child to pick up her Legos from the floor. Sometimes the translation shifts to “aller chercher”, which means “to go look for”, and is fine in the context of picking up pizza. I wanted to translate the longer sentence in Picture 35, and was surprised to see “acheter”, meaning “to buy”, as the proposal. So I asked my eight-year-old, who speaks native French, how she would say “We can pick up some pizza on our way home”, and, as a good translator should, she demanded clarification: “Do you mean that we’ll buy it?” Thus, I learned that “acheter” is a splendid word for this sense of “pick up”, and offer kudos to GT for picking that up. However, as you can see in Picture 58.1, had my sentence been one word shorter – removing either the “ham” from the pizza or the word “cathedral” – I would have been given the unacceptable “ramasser”, and were a student to hand in an assignment using “ramasser”, the teacher would not be pleased. The animation also offers other learning opportunities for students of French (e.g., do you express “some pizza” with “de la pizza” or “des pizzas”?) – the point being, fact-checking Google Translate can be a great way to grow your skills in a language – a detective game to teach you backwards.

Fact-checking GT is especially effective if you have a human chat partner trying to learn your language, so you can help each other arrive at native speech. (Pro tip: for the languages it serves, also try DeepL, which specializes in helping you fine-tune its output.) Remember to trust your own ear – if GT is showing you one result, but everything you have learned about the language tells you something else, your own instinct is more likely to be on target.

Picture 58.1: Time-lapse animation of GT shifting its output choices as a sentence grows. Various parts of the translation are right or wrong at different moments.

You can use GT for informal communications such as text chats, but be aware that the system is not trained on data to support casual conversation. For starters, most colloquial and other multiword expressions are missed entirely, down to ordinary goodbyes. Words are usually translated literally, rather than in the context of their meaning in combination. Importantly, this occurs despite the claims that AI and deep learning have already, or soon will, overcome such problems; they have not, they are not suited to do so with the data available for the best-resourced languages, and they will always fail even vis-à-vis English for languages with fewer resources. Simply put, GT cannot “learn” expressions such as the test phrase “like a bat out of hell” because there are no datasets where they occur in a way that can bridge languages. Nevertheless, such expressions constitute a large part of informal communications. This applies, secondly, not just to colorful idioms, but to single words in everyday speech. GT makes choices based on the formal contexts it trains on, so its heuristics will inherently miss ordinary meanings that fall outside its training material, such as “he was winded after running”. You absolutely cannot use GT to tell a conversation partner that you are falling asleep by saying, “I need to crash”. In terms of register, GT is often set to give formal second-person conjugations, such as the vous form in French, though some languages such as Spanish are set for the reverse; I have observed this and found that it is usually impossible to change between formal and informal registers, but have not documented systematically which languages default to casual versus formal constructions. (You can test your own language with a phrase such as “Do you want to go for a walk?”) Similarly, GT usually defaults to masculine constructions, thereby botching first and third person expressions for 50% of its users; in one case, the translation of “I will be unavailable tomorrow” to Russian had the connotation of sexual availability when said by a woman. For these reasons, I recommend that GT be taken with quite a few pinches of salt when translating casual text; do not assume your correspondent will know what you mean when you send a GT translation of “pinch of salt”.

Use GT to check your spelling, with caution. For example, to check whether the French word “pamplemousse” is spelled with one “s” or two, you can type “grapefruit” in English on the source side and see the right spelling. This works pretty well if GT gives you the term you already know to expect. However, if you want to know whether the female cat, “la chatte”, has one “t” or two, you will get the masculine form, “le chat”, if you type “the cat”. You can force the right answer by typing “the female cat”, but you will be told “Le chat femelle” if you type “The female cat”, which, 🙈, more or less means “the female he-cat”, some sort of hybrid character from a few Broadway musicals. (Google has taken preliminary steps to address the gender issue in its high-investment languages, so simply typing “cat” now presents you with both the male and female forms in French, but the feature is in its infancy. Appreciate gender options when they appear, but certainly do not rely on them being factored into the results you see.) If the term you are seeking can have more than one form, due to gender, noun class, conjugation, level of formality, or some other variable, you must be clear in your own mind that you are looking at the form you need, because GT is only giving you one guess from a range of possibilities.

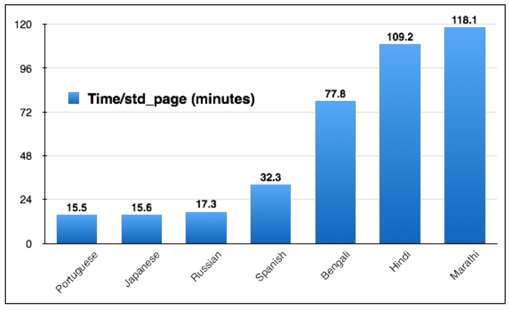

GT can be quite helpful as a starting point in translating formal documents such as business letters from English to top tier languages, but should never be given the last word. Figure 8 shows the results of a study that timed how long a person would need to post-edit the GT translation of a biology textbook [zotpressInText item=”{JI4GULVD}”]. Professional human translators generally report a pace of about one 250-word page per hour. By post-editing GT product, some languages are able to reduce the time to as low as 15 minutes; GT does the grunt work of suggesting the vocabulary and grammatical arrangement, saving time for the human in the percentage of cases where the tool gets it right. A professional translator from Hungarian to English estimates unscientifically, for example, that GT gives him a 70% to 80% head start – in keeping with Hungarian’s Tarzan score of 65. You can time yourself post-editing the English in Picture 58 to see how long it takes to polish 37 source words from a top-tier language to Bard caliber. However, this time savings is lost for languages at the lower tiers, where the output is so dubious that a person could spend more time correcting GT than translating from scratch: an average of 2 hours to post-edit GT in Marathi (though it is not known how long the same document would take from scratch, given that much of the editing time involved researching undocumented terminology, so would have been constant whether or not MT was attempted). Unfortunately, without a good reading knowledge of both languages, you cannot know whether GT output conveys the intended message; for example, Lewis-Kraus [zotpressInText item=”{MLXRFY49}” format=”(%d%)”] reprints a translation from Japanese (a mid-tier language) to English that captures all of the essential original information, whereas the best post-editing strategy for the translation from Japanese in Picture 1 would be to hit the delete key.
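The pace figures quoted above can be turned into a quick back-of-the-envelope comparison. The following is only a sketch of that arithmetic: the function names are illustrative, and the 60-minute baseline is the generic professional pace mentioned in the text, not a measurement for any particular document.

```python
WORDS_PER_PAGE = 250  # page size quoted in the text


def words_per_hour(minutes_per_page: float) -> float:
    """Throughput implied by the time spent on one 250-word page."""
    return WORDS_PER_PAGE * 60 / minutes_per_page


def time_saved(baseline_minutes: float, post_edit_minutes: float) -> float:
    """Fraction of the baseline time saved by post-editing (negative means slower)."""
    return 1 - post_edit_minutes / baseline_minutes


print(words_per_hour(60))   # from-scratch pace: 250.0 words per hour
print(words_per_hour(15))   # best post-editing pace: 1000.0 words per hour
print(time_saved(60, 15))   # 0.75, i.e. three quarters of the hour saved
```

Plugging in the 2-hour Marathi figure gives 125 words per hour, though, as noted above, the from-scratch time for that document is unknown, so no saving can be computed for it.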

Cautionary Situations7

In emergencies, use GT when there is no recourse to a human translator or other tools. Be patient and assume you are Tarzan testing out words for talking to Jane. If you are on Skype trying to reunite a family in Romania and their baby in the USA who was kidnapped by a despotic president, do not hesitate to try the words GT generates. You are unlikely to make the situation worse, and you can see pretty quickly whether there is a semblance of understanding.

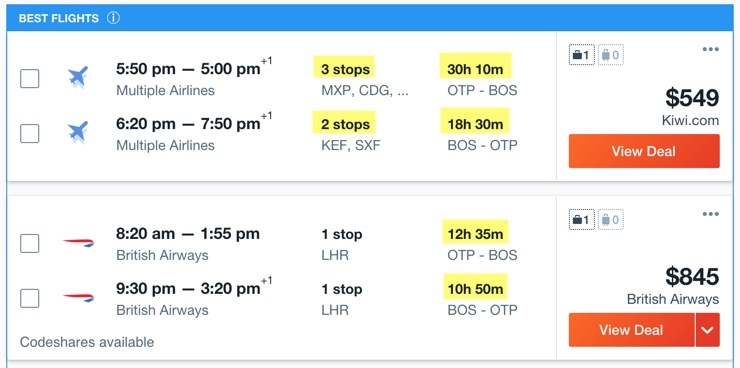

GT output should only be viewed as suggestions, in the same way as you inspect search results if you want to book a flight online. In fact, flight results are more trustworthy than translations, because you know that your options between Bucharest and Boston will at least get you somehow between the right cities. (I chose this pair for the alliteration, but a subsequent test, shown in Picture 59, shows how miserable you would be if you let a computer choose its prediction for best flights for this route.) With GT, a speaker of Haitian Creole who wanted to go to “Singapore” would be given the equivalent for “Senegal” in all other languages, which would deposit someone trying to get from Port-au-Prince to Singapore 17,746 km / 11,027 miles away from their destination, nearly half the circumference of the globe, if the mistake were made on a travel site.

My research proves that GT has a statistically high likelihood of making fundamental mistakes even for its best languages, but you can never know when. For example, when GT translates this sentence into French, it very wrongly produces “projet de loi”, a legislative proposal, as the equivalent for “bill”: “In fact, I had misplaced the bill and was anticipating your reminder.” However, when “In fact” is removed, the output is rendered correctly with “facture”, and the same happens if “anticipating” is replaced with “waiting for”. Regular GT users will see this phenomenon at play throughout the top tier – you might get a brilliant translation at one moment, or get something incomprehensible with a slight change in wording or punctuation. Some number of catastrophically erroneous results is part and parcel of what GT shamelessly calls “near human” translation. Now that you know, if you are writing to a creditor about an unpaid bill, and you copy and paste the output from GT without inspecting it closely, and your creditor is completely baffled, shame on you.

Picture 59: “Best flights” on kayak.com from Bucharest to Boston. The recommended journey involves 7 flights with no meals or water on 3 no-frills airlines instead of 4 flights on one regular carrier, has no option to check luggage through and charges luggage fees on each separate airline, totals more than a full extra day of your life than the fastest routing, will easily set you back $100 per passenger for food during your layovers outside Milan, Paris, Reykjavik and Berlin, and leaves you to sleep on the floor of Charles de Gaulle airport.

The best way to use GT in situations where the translation matters8

1. Do not use it in isolation.9 Check your results against Bing and any other services that support the language you are translating versus English. If your language is among the twenty-four served by DeepL, use that as your starting point because it often offers a wide selection of alternatives (such as vocabulary, gender, or politeness). However, all of the online MT services face the same limitations. You should therefore also consult bilingual dictionaries such as WordReference.com, which shows human-curated translations for numerous senses of polysemous terms in many languages, and also includes many multiword expressions. Further, you should try your phrase in Linguee.com (see Picture 25), which highlights direct human translations in known parallel documents.

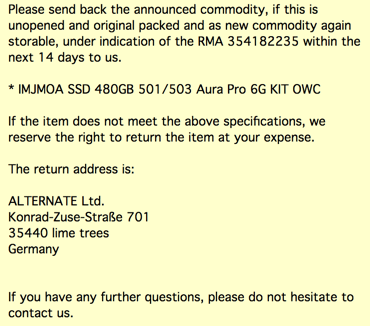

Picture 60: A real business email that was translated by machine from German to English. The first paragraph is awkward but readable. The address, however, transforms the city name from “Linden” to “lime trees”. Without review of MT output, an expensive computer part could have gotten lost in the post.

2. You must have some knowledge of the target language. 10 If you cannot sense whether GT output is within the zone of correctness, you must not use the translation in mission-critical situations. In important situations, blind translations will always – repeat: always, for every language – result in errors that make sections of your document incomprehensible at best, and sometimes the inverse of your intent. Picture 60 shows a case where one crucial error from German (ranked 2nd in my tests) to English could have resulted in the loss of a 200€ computer part.11 You can often improve the output with some back-and-forth tweaking, massaging your wording until you get the output you are looking for – but that means your knowledge of the target language must be substantial enough to recognize when GT’s results convey the meaning you intend.

3. If possible, have the results vetted12 by someone who knows both languages. Experience shows that humans will need to rewrite substantial elements of documents translated by GT, but at a significant time savings versus starting from scratch, for the top tier languages. Experience also shows that many companies choose not to pay for polishing their translations, and the GT output they rely on is consequently unintelligible gibberish.

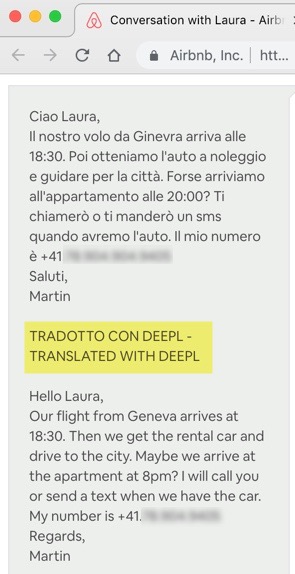

Picture 61: Message using MT that includes a disclaimer and the text in both the source and target languages.

4. Include a disclaimer.13 State up front something like: “This document was translated from English with the aid of Google Translate. Apologies for any errors.” I also recommend appending the original text, so a reader with some knowledge of the source language can try to work out the translation failures. Here is a perfectly implemented disclaimer on a professional sports website, which provides due caution to readers along with an assessment in keeping with TYB scores: “For those of us who do not speak Spanish, here’s the Google Translate (which tends to be pretty accurate with Spanish)”. Picture 61 shows the proper way to use machine translation in a situation where you do not have the knowledge to post-edit the automatic results.

5. Tweak your own words.14 You can say things in your natural speech in an infinite number of possible ways – way more than a computer has a prayer of predicting. (An important TYB chapter looks in detail at the elements of language that are so confounding to MT. Read it!) Things that seem clear to you will be puzzles for translators, human or machine. As one example from a googol of possibilities, Google has an error message when its Chrome browser crashes, “Aw, Snap!”, that makes perfect sense in California but is mystifying in much of the world. They would have saved their localizers a lot of grief had they tweaked their original language to say, “An error occurred”, or “Something went wrong”, or “We could not load your page”. You can try to iron your original text so that it is smoother for the translation engine, using terms and methods of expression that might occur more often in the data on which GT is trained. Ditch colorful words like “ditch”, uncommon words like “nefariously” for which GT will make up stuff, party terms that form a special meaning together (especially if they are separated like “pick up“, since GT will not pick the fact that they go together up), terms with multiple meanings, and long and convoluted sentences such as the one you are reading. It is best to go back and forth between your text and the computer output, until what it is giving you comes close to your intuition about what you should be getting. For more, read these suggestions from Lionbridge, a major company in the translation industry, “Writing for Translation: 10 Translation Tips to Boost Content Quality“.

Seven situations in which you categorically cannot rely on GT15

1. Blind translations16 – that is, translations where you cannot read the results personally. You must assume that GT output will be flawed, at roughly the level measured in this study. If you are sending birthday greetings on Facebook to the speaker of another language who you met once on holiday, you can use the output but anticipate confusion. If, on the other hand, you are preparing a financial document to be notarized for the tax office, the output would put you at serious risk of disaster. If you do not believe that, read the GT terms of service:

We provide our Services using a commercially reasonable level of skill and care and we hope that you will enjoy using them. But there are certain things that we don’t promise about our Services. Other than as expressly set out in these terms or additional terms, neither Google nor its suppliers or distributors make any specific promises about the Services. For example, we don’t make any commitments about the content within the Services, the specific functions of the Services, or their reliability, availability, or ability to meet your needs. We provide the Services “as is”. … To the extent permitted by law, we exclude all warranties. … When permitted by law, Google, and Google’s suppliers and distributors, will not be responsible for lost profits, revenues, or data, financial losses or indirect, special, consequential, exemplary, or punitive damages. … The total liability of Google, and its suppliers and distributors, for any claims under these terms, including for any implied warranties, is limited to the amount you paid us to use the Services.

GT’s lawyers do not place an iota of trust in their output. Nor should you. And you and GT’s lawyers should especially pay attention to:

2. GT must not be used in medical situations.17 Language presents a major difficulty for hospitals and clinics around the world. Medical staff often do not speak the same language as their patients, whether because the doctor or nurse found work in a place far from home, or because the patient has immigrated from elsewhere. Moreover, medical training in places like India, Africa, and Latin America occurs in university languages like English or Spanish, not in local languages like Marathi, Sesotho, or Nahuatl (which is not a GT language, so the effort would probably be to translate to Spanish because Nahuatl is a language of Mexico, and hope the Nahuatl speaker can scrape by with that, which is not a great assumption, but I digress…). The temptation is to jump on GT and assume that the output will be “good enough”. It. Won’t. Every problem identified in this study is exacerbated in medicine because GT has no demonstrated training in the domain. There is no evident source of parallel texts for GT to learn terms pertaining to medicine between English and 107 other languages, and I will eat my hat if they have been manually curating such data. We saw in Picture 46 that GT does not even know what a delivery room is in German, much less how to talk a woman through delivering a baby in Khmer or Kurdish. Extrapolating to languages that score at the level of Chinese or lower, from research on emergency room discharge instructions for Chinese, Google translations risk serious harm in 8% to 100% of critical instructions for 91% of GT languages [zotpressInText item=”{5248838:759FWFCJ}”].

Google should place a prominent medical disclaimer along with all its output, as an ethical requirement if not a legal one. As stated in an article in the British Medical Journal that found 42.3% “wrong” medical translations from English to 26 languages, “Google Translate should not be used for taking consent for surgery, procedures, or research from patients or relatives unless all avenues to find a human translator have been exhausted, and the procedure is clinically urgent” [zotpressInText item=”{5248838:JZP4HQKT}”]. Unfortunately, medical service providers will try to cut corners by using GT instead of paying for professional translation. If you know of a medical situation in which Google Translate is being used instead of human translation, scream MALPRACTICE at the top of your lungs.

Don’t take my word for it, though. Take Google’s words about the Covid-19 pandemic:

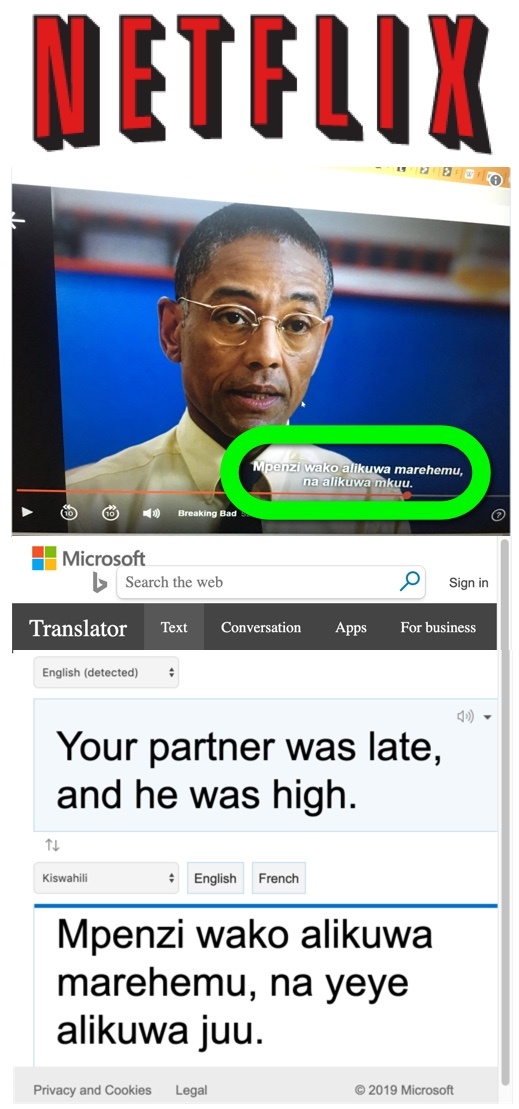

Picture 62: A Swahili subtitle on Netflix in Kenya, from Breaking Bad, and the result for that phrase on Bing. “Marehemu” is a corpse, which is a way of being “late” that no sentient person would use in the given context, but is proposed by both Google and Bing, while “mpenzi” (lover) is unique to Bing. I cannot definitively trace the sources for the Netflix subtitles, because I do not have enough examples at hand, and machine translations can vary from moment to moment, but there is not a shadow of a doubt that MT is involved. My hunch is that Netflix runs transcripts of their English subtitles through a translation engine and pastes the results into the subtitle track, perhaps with some human polishing for punctuation, capitalization, and the like. Photo of Breaking Bad from @_guchu

3. Do not use GT to produce any text for your business,18 unless you have the output thoroughly reviewed by a speaker of each target language. It is fine to use GT to look through incoming documents, where you yourself know that the text might not make sense, and you can use your natural intelligence to puzzle out bad grammar and research the nonsense parts. You must assume, however, that your clients will not know that the text they are looking at is a machine fantasia. For the bottom 2/3 of languages measured in this study, customers will not have the inclination to suffer through an article, web page, or email that looks like it was written by a drunk baboon. For the upper languages in which GT has invested more time and money, the problem is more pernicious – you will often get output that looks okay at first glance, but misses important details, chooses words that create mysteries, or even reverses your intent. People reading your documents will believe they should take your words literally. When they do, at best they will think you are a fool, and at worst your business relationship with them will fall to ruin. Businesses look ridiculous when they use GT. Chicago O’Hare Airport gives users a “language” option that uses the Translate API to produce pages for the Google languages; as you can test, for most languages, the translations could not guide you to the airport, much less get you through security and onto your plane. Localizing the website for an international airport, at least for the languages of the countries it connects to directly, is a great idea – but that is the sort of service that should be produced by paid human translators, or not at all. Customers who use GT to try to figure out your documents or website know that they are getting an approximation. As blogger Darren Jansen says, their use of GT “doesn’t reflect on you,” but if you give them GT output that looks like you have produced it, “that is a translation that YOU are providing on YOUR website. YOU have to stand behind it, and it reflects poorly on YOUR brand.”

Similarly, as seen in Picture 62, Netflix has decided to serve its East African market by adding Swahili subtitles that appear to come from Google or Bing.19 The text has very little to do with the on-screen narrative, so it serves no purpose for the intended audience – and it is clear from user discussion that they think Netflix has callously hired a calamitous translation agency. The subtitles have, however, earned Netflix a lot of derision in the local media.20 You should never consider using Google Translate with your money or your reputation at stake.

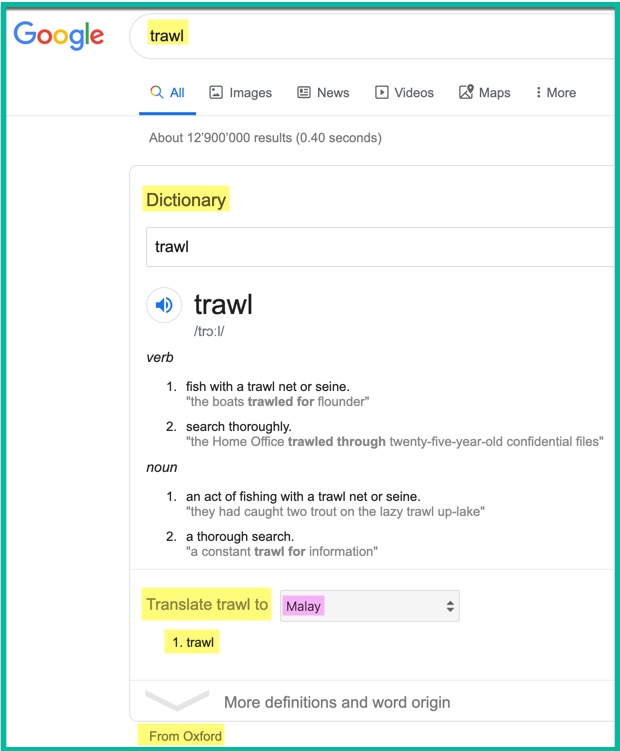

Picture 63: Google claims that it offers a “dictionary” within its English search results, including translations to all GT languages. In this example, the primary English noun sense of “trawl”, a large fishing net that is dragged through the water, is absent from view. For translation, the lei lie “trawl” is presented for a great many languages, and words with no sense information that might or might not be correct in certain contexts are given for high-investment languages like Spanish and French. Nevertheless, Google claims legitimacy for its results with the tag “From Oxford”, which is certainly false when it comes to the “translations” from Malay and all other languages. (Highlighting added to original screenshot.)

4. Do not use GT to translate individual words. Google is not a dictionary,21 even though it automatically assembles some language data in a form it calls a “dictionary”, as shown in Picture 63. As found by Gaspari and Somers [zotpressInText item=”{5248838:R8JPK5HE}” format=”(%d%)”], “using MT services available on the Internet as if they were bilingual dictionaries or lexical look-up facilities is a misguided strategy that is liable to provide users with partial and misleading information, in that MT software is designed to hide lexicographic information from the users and to provide one-to-one target language equivalents.” In addition, if Google does not have your input word in their source vocabulary, they will just make up a fictitious translation and never let you know, as you can see in their straight-faced delivery of translations for “ooga booga” from all of their languages, or their Icelandic to English translation of “barna samfella” (a “onesie” for babies – this cute one supports kamusi.org) as “children’s intercourse“.

Among the top 100 words in the English language, which make up more than 50% of all written English, the average word has more than 15 senses – giving you, on average, fifteen-to-one odds – that is, 6.25% – for a single word translation. GT makes a statistical guess to highlight one translation of one sense, and in some cases offers options that can help a knowledgeable user home in on a better result. However, unless you have a good knowledge of the target language and are only using GT to jog your memory or help with your spelling, you must not trust the result for single words or party terms. Most common words have at least two senses, and many have dozens, so your chance of getting the wrong translation ranges from 50% up to the high nineties, in the cases where Google is not just using MUSA for words that are outside its vocabulary. GT is not a mind reader, and the meaning you have in your mind will differ from the meaning Google picks more often than not.
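The arithmetic behind these odds can be sketched in a few lines of Python. This is a minimal illustration, assuming a system that picks blindly and uniformly among a word's senses; that uniform-guess model is a simplification for the sake of the example, not a description of how GT actually chooses, and the function names are hypothetical.

```python
from fractions import Fraction


def chance_from_odds_against(k: int) -> Fraction:
    """Convert 'k-to-one' odds against into a probability of success: 1 / (k + 1)."""
    return Fraction(1, k + 1)


def chance_wrong_sense(num_senses: int) -> Fraction:
    """Chance of missing the intended sense under a uniform blind guess (an assumption)."""
    return 1 - Fraction(1, num_senses)


# Fifteen-to-one odds work out to 1 chance in 16.
print(f"{float(chance_from_odds_against(15)):.2%}")  # 6.25%

# Two senses already mean a 50% risk of error; the risk climbs with every extra sense.
for senses in (2, 5, 16, 50):
    print(senses, f"{float(chance_wrong_sense(senses)):.2%}")
```

Under this simplified model, a word with two senses is wrong half the time and a word with fifty senses is wrong 98% of the time, which is the "50% up to the high nineties" range described above.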

Hey @Google There are other cities too in the world apart from Faridabad 😛#Translate #ScrewedUp #Google #GoogleTranslate pic.twitter.com/cG6bpFKrl8

— Write it Bold (@wibsocial) September 25, 2019

Don’t take my word for it, though! Here is a game you can play:

- Find a real human-compiled dictionary for your favorite language, and look up some words.

- Try the same words in GT.

- Give GT one point for every result that shares any sense with the genuine dictionary, or proposes something different but viable (e.g., GT says “rich” while the dictionary says “wealthy”). [In the results below, I don’t know how to score the last item, where “\t” inexplicably occurs, but let’s call it a point for Google.]

- Give your dictionary one point for every result that does not appear in GT, even if the GT result has some vague similarity (e.g., GT says “run” while the dictionary says “runny”, which are clearly not the same thing).

- Take away one point from GT whenever it produces a lei lie, an invented word that does not appear in the real dictionary, and is not related to the dictionary entry in any noticeable way (e.g., don’t count “run” as a lei lie in the previous example because it is in some way based on interpreting data, but do consider it a lei lie if GT merely reproduces the source word on the target side)

- Report your results in the comments section below!

Even if you don’t know Swahili, you can dominate the game by using the TUKI dictionary. Many lucrative languages are treated well at wordreference.com, dictionaries for dozens of European languages can be accessed at dictionaryportal.eu, and you can find a variety of other languages at lexilogos.com.

Let’s play for Irish. I cannot recognize any Irish words, so this is a blind test, using Ó Dónaill’s [zotpressInText item=”{T3KJ5SU7}” format=”(%d%)”] Irish-English Dictionary, Foclóir Gaeilge-Béarla. This dictionary represents the gold standard in Irish lexicography: it “has been the primary orthographical source for the spelling of the language since it was published and provides the most comprehensive coverage of the grammar and other aspects of words in Irish.” Ten words, completely at random, with an experiment you can replicate for any language, to test whether Google Translate is a dictionary. Ladies and gentlemen, start your engines:

| Irish Word | Foclóir English | Google English | Foclóir Score | Google Score |
|---|---|---|---|---|
| rumpach | narrow-rumped, lean | rump | 1 | |
| rúndaingean | strong-minded, resolute | confidence | 1 | |
| lacstar | idler, gadabout, playboy | lacstar | 1 | -1 |
| hapáil | hop | randomization | 1 | -1 |
| vaigín | waggon | wagon | | 1 |
| martaíocht | killing, provision of beef, scheming, ingenuity | bureaucracy | 1 | -1 |
| niciligh | nickel | nickel | | 1 |
| gadráilte | tied, strung | cached | 1 | -1 |
| jaingléir | straggler, vagrant, casual fisherman | juggler | 1 | -1 |
| daingnitheach | strengthening, stabilizing, ratifying | ratification. \t22 | | 1 |
| Totals | | | 7 | -2 |

Results: With 10 random words, Google matched the gold standard 3 times, came somewhere within the vector space twice, and invented translations out of whole cloth 5 times. Tell all your friends: Google is not a dictionary.
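If you want to keep score for your own language, the rules of the game can be tallied with a short script. The sketch below is one possible reading of the rules, not an official scoring tool: the verdict labels (`match`, `near`, `lei_lie`) and the function name are hypothetical, and the verdict assigned to each Irish word is inferred from the results reported above (three matches, two near misses, five inventions).

```python
from collections import Counter

# One verdict per word:
#   "match"   -> GT shares a sense with the dictionary, or offers a viable synonym
#   "near"    -> GT is vaguely related to the entry but is not the same thing
#   "lei_lie" -> GT invented a result, or merely echoed the source word
VERDICTS = {
    "rumpach": "near",
    "rúndaingean": "near",
    "lacstar": "lei_lie",
    "hapáil": "lei_lie",
    "vaigín": "match",
    "martaíocht": "lei_lie",
    "niciligh": "match",
    "gadráilte": "lei_lie",
    "jaingléir": "lei_lie",
    "daingnitheach": "match",
}


def score_game(verdicts: dict) -> tuple:
    """Return (dictionary_score, gt_score) under the rules of the game."""
    counts = Counter(verdicts.values())
    # The dictionary earns a point for every entry GT failed to reproduce.
    dictionary_score = counts["near"] + counts["lei_lie"]
    # GT earns a point per match and loses a point per lei lie.
    gt_score = counts["match"] - counts["lei_lie"]
    return dictionary_score, gt_score


print(score_game(VERDICTS))  # (7, -2)
```

To score another language, replace the entries in `VERDICTS` with your own words and judgments; the judging itself still has to be done by a person with a real dictionary in hand.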

5. Do not use GT for any non-English language pairs23 (except Catalan to Spanish, Czech-Slovak, and Japanese-Korean, which are the only production pairs known to be based on direct training; if Google tells me about other direct pairs, I will update the list). In all other known cases, as demonstrated in repeated tests that you can easily try yourself, the translation passes via English. French to German goes via English. Swedish to Danish goes via English. Hindi to Urdu goes via English. This means that all errors from Language A toward English are retained, and an additional layer of errors is introduced from English to Language B at about the level measured in the empirical study. The rates calculated for all 5151 pairs that do not include KOTTU are indicative, though not definitive because I could not test translation quality from each language toward English. Just over 1% of pairs were estimated to achieve an understandable result more than half the time. Put another way, in 99% of Language A to Language B scenarios that are possible in GT, the chance of understandable output is lower than a coin flip. In three quarters of cases, 3861 pairs, intelligibility is below 25%, and nearly three out of ten cases (1474) will be on target for MTyJ (Me Tarzan you Jane) approximations 10% or less of the time. With numbers like these, GT is essentially useless as a translation tool where English is neither the source nor the target. The Norwegian Olympics team discovered this when their chefs ordered 1,500 eggs in Korea using GT from Norwegian to Korean, and a truck delivered 15,000 instead. You may be able to get the gist of an article, but do not count on GT in any situation where understandable output actually matters.
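The way errors compound through an English pivot can be sketched as follows. This is a rough illustration under stated assumptions, not the method used to produce the estimates above: it assumes the two legs of the pivot succeed or fail independently, the function name is hypothetical, the two example rates are placeholders rather than measured scores, and the figure of 102 languages is an inference (it is the number for which the count of unordered pairs comes to 5151).

```python
from math import comb


def pivot_estimate(p_source_to_english: float, p_english_to_target: float) -> float:
    """Chance that A -> English -> B is understandable, assuming independent legs."""
    return p_source_to_english * p_english_to_target


# Placeholder rates: a 60% leg followed by a 25% leg leaves only 15% for the pair.
print(f"{pivot_estimate(0.60, 0.25):.0%}")  # 15%

# Assumption: 102 non-English languages outside KOTTU, inferred from the pair count.
languages = 102
total_pairs = comb(languages, 2)
print(total_pairs)  # 5151

# Shares of all pairs quoted in the text.
print(f"{3861 / total_pairs:.1%}")  # 75.0%
print(f"{1474 / total_pairs:.1%}")  # 28.6%
```

Because the two rates multiply, the pair can never do better than its weaker leg, which is why so few non-English pairs clear the coin-flip threshold.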

Picture 64: The big picture becomes harder and harder to see with GT translations when (a) the text strays from the type of formal writing that GT is trained on, or (b) the translation language is among the majority that TYB evaluators scored poorly. This image was edited in Fotor Photo Editor from the original Picture 58.1.

6. Do not use GT to translate poetry, literature, or humor.24 Poetry, or any other artful literature, uses figurative language where it is essential to understand meanings deeper than the words on the surface. GT cannot divine the sentiments in a speaker’s heart. You would expect that artistic features such as rhyme and meter will be lost, but so will any sense of the poet’s message. A line-by-line comparison between GT output and a human translation of a poem from Catalan to English provides a typical case in point. The opening line of the Analects of Confucius, one of history’s most well-known literary works, is 學而時習之、不亦說乎 in the original Chinese, translated to English by a real person as, “Isn’t it a pleasure to study and practice what you have learned?” Use GT, though, and you will learn that the quote means “Learn while learning”. As my daughter likes to say, “Yeah, but no”.

For a fuller look at GT and literature, I urge you to detour to Douglas Hofstadter’s essay “The Soggy Soup Maid“, which he graciously entrusted TYB to reproduce on this site.

The same warning can also be applied to translating humor, especially jokes that depend on twists in the original language. However, the mistakes that GT injects can be so funny in their own right that you will often end up with a collection of words that make you laugh, even though the original pun is certain to be missed. GT translations of jokes will be utterly confusing to anyone reading them blind in the target language.

7. GT cannot be used for any of the roughly 6900 languages that are not in the system.25 As obvious as this may sound, GT is often touted as a “universal translator”. In fact, 98.48% of ISO-identified languages are not covered, and 99.9996% of potential translation pairings are missed. For three continents, not a single indigenous language is covered: North America, South America, and Australia. This includes languages with millions of speakers, such as Quechua, Aymara,26 Nahuatl, and Guarani. Of the world’s top 100 languages, 34 are excluded, spoken cumulatively by about three quarters of a billion people, or ten percent of the human species. Of course, Google has no legal or moral mandate to cover any language, and the 1.5% they do cover, if the most generous possible numbers are used regarding speakers and literacy, nominally serve five billion people vis-à-vis English. I am not arguing that the service falls short of its own coverage goals. I do insist, however, that GT falls far short of the media coverage it winkingly accepts, that has convinced the public they provide universal translation. To the extent that they accept that mantle, or do not take steps to refute it, they are complicit in a deception. The consequence of this deception is that almost no research funds are available to bring untreated languages into the technological realm, because it is so widely believed that Google already does it.

Final takeaway27

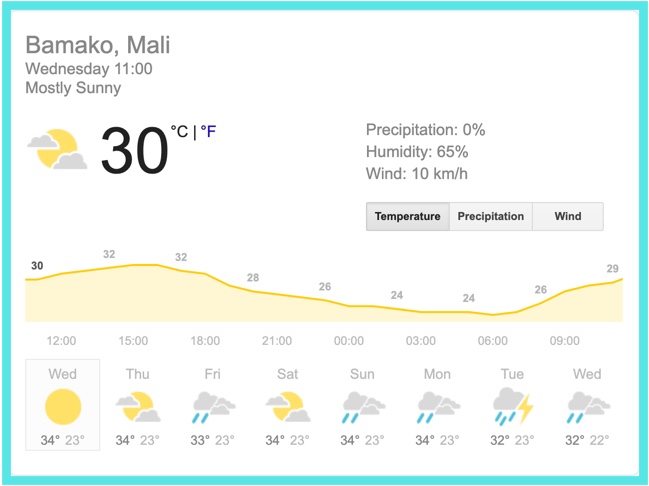

The evaluation scores in Teach You Backwards provide a broad indication of how much confidence you should have for a given language. You are also urged to run your own tests to see how trustworthy you find GT for the languages you know. For example, based on personal experience, my recommendation to use GT as a writing aid applies to French, with a Tarzan rating of 60, but not to Swahili, with a rating of 25. Your mileage may vary.28 Even in the best languages, though, you should always be prepared that some part of your translation might be gibberish, or the exact opposite of what was intended. As much as you may refer to weather forecasts to give you guidance, you know well enough not to time activities in Bamako for next Tuesday based on a computer model that suggests rain that afternoon – though you could surely use the projection in Picture 65 to guide you against wearing a ski jacket. Similarly with Google Translate: depending on the situation, what you get could range from a few right words to a masterful rendering of the original text. If you know the source and target language well enough and nothing in the output strikes you as crazy – in the weather analogy, if you can step outside, check the thermometer, feel the wind, and look at the clouds to get a sense of whether it will be nice to walk in an hour, which you can merge with what the computer is telling you based on information slightly beyond your horizon – then take the bet that the result is an adequate approximation of the original text. If you don’t have such information, especially for languages beneath the top tier, then all bets are off.

In the end, Google Translate is a set of suggestions based on models that may or may not be accurate, and data that is certainly incomplete in all languages. Treat the output as suggestions, not facts. Do as much extra checking as you can, preferably with human-compiled dictionaries or, best, speakers of the target language. Be prepared for misunderstanding, and expect that sometimes your results will be hilarious Giggle Translate. Do not rely on the translation when the output is important – not if your course grade depends on it, and absolutely not in any medical diagnosis or treatment situation. And then, with this awareness squarely in mind, go ahead and use GT to help you communicate with people you otherwise couldn’t. GT is not usually going to make you Shakespeare, but if it can help you speak like Tarzan, you’ll make it through the jungle okay.

Is DeepL Better than Google Translate? A No, A Punt, and A Yes29

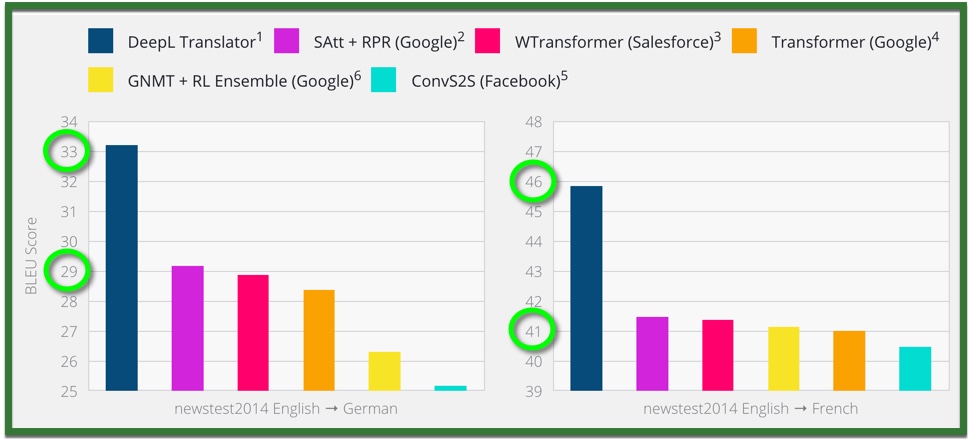

Picture 65.1: DeepL uses visual trickery in the way they report the results of studies to make themselves look head and shoulders above the competition. The graphs make a 4.5% difference with GT look more like 200%, and an 8% difference with Facebook look like Facebook barely scored. These images are deep lies that would be rejected by any scientific journal. https://www.deepl.com/press.html

Many people suggest that DeepL produces better results than GT – in the words of one reviewer, “Folks outside of Google, who like to drink the AI-hype-honey, will say: yeah but DeepL will fix all of that”. DeepL itself states, “our neural networks have developed a previously unseen sense of ‘understanding’” (http://www.deepl.com/blog.html), and does not shy from reproducing journalists’ assertions of its superiority at the bottom of their main page. There is no scientific basis for this belief. DeepL claims to have tested 100 sentences in 3 languages. Those are potentially interesting results, but fail as legitimate science: “Specific details of our network architecture will not be published at this time.” By withholding the research from inspection, their truth claims cannot be supported. Maybe we should just trust them, but look at the graphs in Picture 65.1, reporting the BLEU scores. Those are DeepLy deceptive visual sleight-of-hand, making one 4 point difference look like a 50% variation, and a second 4 point difference look like it is almost 3 times better – view “How to Spot a Misleading Graph” on TED-Ed for an animation of the trickery at play. Moreover, they cherry-pick which results they choose to share, giving no indication about whether their findings are transmissible to the other languages in their system. Without publishing their findings in a way that can survive peer review, I’m afraid their graphs can only be read as deceptive advertising copy.
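The visual trick of starting a bar chart's axis above zero is easy to quantify. The sketch below is a generic illustration, not a reconstruction of DeepL's charts: the two scores are hypothetical placeholders chosen only because they sit 4 points apart, and the function name is an assumption.

```python
def apparent_ratio(a: float, b: float, axis_start: float) -> float:
    """Ratio of the drawn bar heights when the axis begins at axis_start instead of zero."""
    if axis_start >= min(a, b):
        raise ValueError("axis_start must be below both values")
    return (a - axis_start) / (b - axis_start)


# Hypothetical scores, 4 points apart; these are NOT DeepL's published numbers.
score_a, score_b = 32.0, 28.0

honest = apparent_ratio(score_a, score_b, 0.0)      # axis starts at zero
truncated = apparent_ratio(score_a, score_b, 26.0)  # axis starts just below the bars

print(round(honest, 2))     # 1.14
print(round(truncated, 2))  # 3.0
```

With these placeholder values, a lead of about 14% is drawn as a bar three times as tall, simply because the axis starts at 26 rather than at zero.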

Deutsche Welle news service takes the bait anyway, declaring DeepL “three times more powerful than Google Translate” in “regular blind tests”. I am unaware of any rigorous measurements of translation quality between the two services. However, as a late learner of French, I consult both in the course of my personal life in French-speaking Switzerland and my professional life working with people throughout the Francophone world. While writing Teach You Backwards, I have paid close attention to the behaviors of both GT and DeepL. Here I offer my observations, which are not systematic and do not necessarily apply beyond French (though I have cause to suspect they do).

We can compare DeepL and GT in three ways:

- Are the first-pass translations better in DeepL than in GT for the same language? I say no. Sometimes they both do a good job, sometimes they both mess up (often making the same mistakes), and it can fall either way when one is good and one is bad. Testing in 2022, for example, both came up with an excellent equivalent in French (donnez à fond) for the English “go all out” when asked to translate a sentence from a real English conversation; they fell on either side of the coin flip, each giving a definitive-seeming answer, when choosing between formal and informal registers (vous versus tu); and they both failed entirely with “on the up and up”, a common phrase of five simple words that carry the specific meaning of “honest” when they appear together. Both make up stuff as part of their algorithm when their data falls short. Both (TYB testing shows, and you can easily use our method to confirm yourself for the languages of your choice) pivot through English for most language pairs where English is neither the source nor the target, which means that erroneous translations from Language A to English are then chiseled in stone and compounded with errors from English to Language B – with cascading error rates similar to the debilitating numbers for polysemy discussed in the chapter on translation mathematics.

- Are good first-pass translations a better percentage of the output from DeepL than from GT? You could spin the facts in this direction, because DeepL works with many fewer languages. By limiting themselves to 24 languages with some of the best data resources (English plus Chinese and 22 official European languages that share a large parallel corpus of professionally translated documents), they don’t enter into the quagmires of languages about which they know a lot less. GT is likely to generate a high percentage of malarkey in most languages outside its top tier. DeepL plays it safe and does not even venture toward those waters. GT fails badly in languages like Uzbek and Cebuano. DeepL does not try. Not trying where you know you cannot succeed might be a cop-out, or it might be the more honest strategy. What say you?

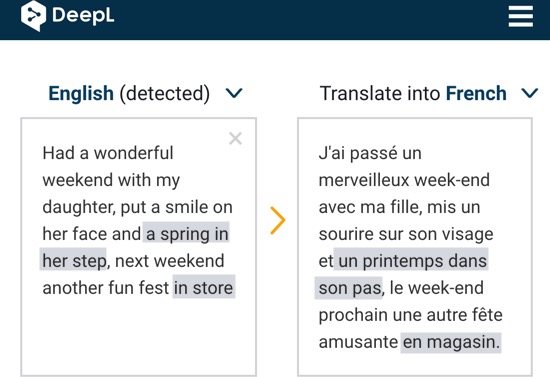

- Can a user with some knowledge of the target language get better results from DeepL than from GT? This is a yes, because DeepL offers alternatives for post-editing on the target side that let humans use what they know to zero in on a translation that matches the intent of the source text.

DeepL does not cite any formal studies to back up their claims of superiority. Larousserie and Leloup [zotpressInText item=”{5248838:EFDK3QGZ}” format=”(%d%)”] compared translations of five texts from English to French using DeepL, GT, Bing, Baidu, and Yandex, and preferred DeepL (without metrics or methods), while giving no justification for the claim in their subhead that the service performs three times better than GT for French, not to mention the languages they did not look at versus English or as non-English pairs. Statements such as, “DeepL translates your documents at the click of a button, to the world-class standard you’ve come to expect from us” should be read as marketing, not science. IMHO, this DeepL translation is a “world class” mudslide, with snake eyes for polysemy and full-on MUSA for the letter “f”: “Maybe tomorrow, he proposed to his f” → “Peut-être que demain, il a fait sa demande en mariage à son père” (which back-translates as something like, “Maybe that tomorrow, he will demand in marriage to his father” – I’m not sure whether his father is supposed to be getting engaged to him or approving his engagement). And, while DeepL luckily picked right for its first guess for “Do you sell hamsters?”, its runner-up translations proposed that I might want to buy ham, lobster, fish hooks, a hamlet, hamburgers, beans, herrings, a Turkish bath, lettuce, a harness, marmottes, a hammer, a handle, a cooking pot, overalls, salami, germs, or a few other things I could not find in a dictionary.

I use both services frequently, along with Bing, for help drafting letters in French. I have found that all three make similar errors, for example, as seen in Picture 66, mistranslating the tweet botched by GT in Picture 55 almost identically. However, speaking as a consumer, I prefer DeepL for the composition task because it usually offers a wider range of options for refining output on the target side, thereby improving the final result through the interaction of the computer and a somewhat knowledgeable human. Both DeepL and GT might, for example, deliver output where the first sentence uses the formal “vous” and the next sentence switches to the familiar “tu”, but only DeepL is likely to offer you viable alternative options for both sentences in the register you prefer.

This message, to plan an outing with my daughter’s best friend, fried the CPUs of both DeepL and GT, because neither could handle how to formulate verb constructions that worked in both the first clause and the second: “Can Mina, or you and Mina, join me and Nicole…”. Using the DeepL post-editing tools on the French side, however, made it possible for me to arrive at a phrasing that made it clear the invitation was either for the child alone or a parental +1: “Est-ce que Mina peut, ou toi et Mina pouvez vous, joindre à Nicole et moi…”. GT offers a maximum of one alternative per sentence, while DeepL allows you numerous entry points to tweak wherever you see a translation run awry.

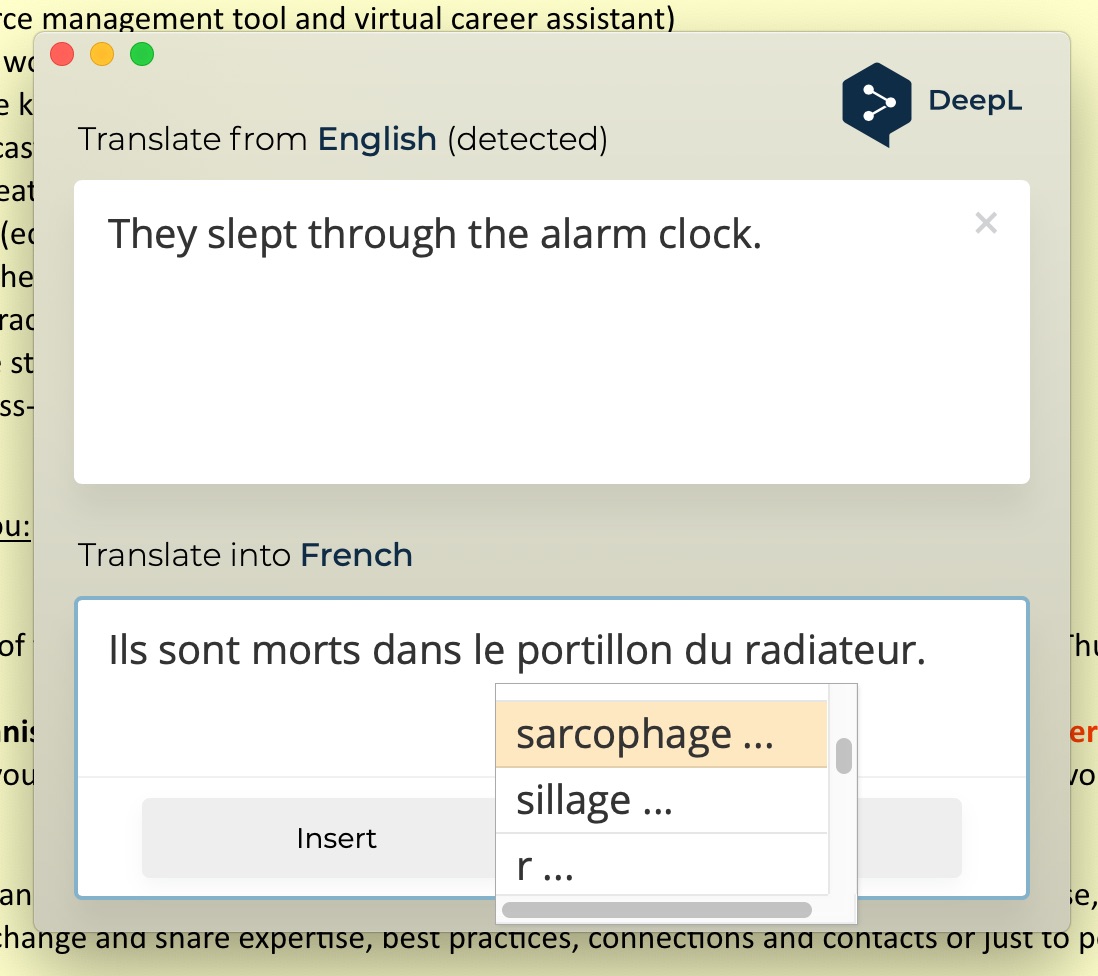

My daughter deploys her native French to tell me that “alarm clock” translates to “réveil”. It happens that GT gets this right, with a 🛡certification. DeepL proposes “réveil-matin”, which is not wrong, but sounds, in the words of a native-French adult, like “what my grandmother would call it”. DeepL’s first alternative is “réveil réveil”, which back-translates as “alarm clock alarm clock”, and additional alternatives get worse; DeepL as a dictionary never offers the option that real people really say, “réveil”. The sentence “They slept through the alarm clock” is destroyed by both GT and DeepL, giving options for “through” that indicate “during” or “within” or “by means of”. DeepL even suggests “Ils sont morts dans le portillon du radiateur” might work (Picture 67) – which back-translates as “They died inside the radiator gate”. My kid produced “Elles ont raté le réveil” (with correct gender), which uses natural intelligence to locate the inner meaning, “They missed the alarm clock” – and she had a huge laugh about the bollocks from DeepL and Google. More than unfortunate, the reason I needed to know this is a funny story involving panicked calls from my daughter’s school, ’bout which ’nuff said. Curiously, DeepL did propose “réveil” by itself as the first option in its full sentence translation, but it also gave “réveil” as choice number four.

The advantages of the post-editing feature would vanish were I stupidly to translate a text into a language I could not speak at all, such as Polish or Russian. In such a case I would always inform the recipient that the document had been automatically translated, and attach the original English, without assuming that one or the other service gave a more comprehensible draft. I could not look at Russian and know if a Cyrillic equivalent of “réveil” is being offered, or if “Child Discovery Center” has the equivalent of this obviously awful option from DeepL: “Centre de découvertes pédophiles”. Can you?

As an example of how post-editing on DeepL can work well with a language you somewhat know, for an important formal communication with a government ministry in francophone Africa regarding a workshop they’d asked me to lead for developing three of their national languages, I needed to ask, “What progress are you able to report?”. Google’s first offer was “Quels progrès pouvez-vous signaler?”, which would be adequate but not fantastic, and the service also offered another stilted option. DeepL also began with a very direct translation that did not land at the right tone: “Quels sont les progrès que vous pouvez signaler ?”. Clicking on “Quels” gave me 59 (!) opening options to scroll through. After deciding that “Pouvez-vous…” sounded best to my ears, I was then able to jump to the middle of the sentence and choose among 28 new options, deciding upon “Pouvez-vous nous communiquer les progrès réalisés ?”, which struck the balance of respect and insistence that the situation required.

Picture 67: The DeepL computer app provides numerous alternatives to refine the translations they offer. In this example, the tool made it possible to produce a plethora of bad translations – the construction shown, which back-translates as “They died inside the radiator gate”, could be made worse with “sarcophagus” or “wake” (trail of a moving boat) as offered. None of the translations produced by the DeepL tool came close to conveying the original meaning. The BLEU score for their first translation is 15.11, and the BLEU score for the words DeepL assembled in the image is 3.28.

If you do not have the skills in the target language to take advantage of such refinement, then the results from GT and DeepL are a tossup. However, the ability to tweak your results if you have some knowledge of the target language is a unique selling point for DeepL in the short stack of languages they serve.

One final factor that now tips the equation to DeepL, but has nothing to do with its underlying translations (you’ll get “réveil” on both the web and the app): the company has introduced a free tool for PCs and Macs that launches a translation box if you simply highlight some text and press Ctrl+C twice in any other application. You can use their post-editing features to tweak away on the translation on the target side, then press “Insert” to pop your output back into its original location, for example into a WhatsApp message. The software is simple and well-designed, working on the target side in more or less the way Kamusi’s source-side SlowBrew system will function when it is complete, and has become an integral stage in my French communications since it was launched. But, whether you use DeepL on the web or the app, its neural network still conjures up hallucinations such as the honest-to-God translation to French shown in Picture 68 for an email sign-off you use every day, “regards”: “Je vous prie d’agréer, Monsieur le Président, mes salutations distinguées”.

Picture 68: DeepL translation of “Regards” from English to French for a business email. The reverse translation could be, “I hope you will accept, Mr. President, my distinguished greetings.” Without the “Mr. President”, the offering would be an appropriate way to end a formal letter, though somewhat over the top for a work-a-day email.

References

Items cited on this page are listed below. You can also view a complete list of references cited in Teach You Backwards, compiled using Zotero, on the Bibliography, Acronyms, and Technical Terms page.


Footnotes

- link here: http://kamu.si/good-tool

- For example, this French article has a few subtle syntax flaws when translated to English, but nails the essence. The one striking oddity versus a human translation is that French has two terms that mean the same thing, so GT translates both, whereas a person would see and delete the part that is irrelevant outside the French context: “tung oil … it is also called tung oil”

- “I depend way too heavily on Google Translate, both for work and in my personal life. For accurate translations I rely on an excellent support staff, but in scanning social media I routinely turn to automated translations — far more than I’d like. My wife and I feel like captives of Google Translate in making sense of Hebrew-language messages on local WhatsApp groups, bank statements, utility bills and so on, but it is adequate, and it continues to improve.”

- Ilya Marritz, ProPublica 2020: How Parnas and Fruman’s Dodgy Donation Was Uncovered by Two People Using Google Translate

- link here: http://kamu.si/systran

- link here: http://kamu.si/learning-aid

- link here: http://kamu.si/cautionary-situations

- link here: http://kamu.si/when-it-matters

- link here: http://kamu.si/not-in-isolation

- link here: http://kamu.si/some-knowledge

- Although the email clearly used MT, there is no information about whether the translator was GT or a competitor.

- link here: http://kamu.si/vet-results

- link here: http://kamu.si/include-disclaimer

- link here: http://kamu.si/tweak-your-words

- link here: http://kamu.si/seven-situations

- link here: http://kamu.si/blind-translations

- link here: http://kamu.si/not-for-medicine

- link here: http://kamu.si/not-for-business

- Netflix turned off their automatic subtitle in 2021, after two years of public outcry.

- One hilarious Twitter comment from a Kenyan, in regards to the notoriously rudimentary Swahili of the majority Luganda speakers in their neighboring country: “Did Uganda do this?”

- link here: http://kamu.si/not-a-dictionary

- The sequence “\t” appears in the GT result.

- link here: http://kamu.si/avoid-non-english-pairs

- link here: http://kamu.si/poetry-humor

- link here: http://kamu.si/not-in

- The quote from Nelson Mandela that inspired the title for this web-book, along with 16 others, can be found in Aymara on Global Voices.

- link here: http://kamu.si/final-takeaway

- Scientific Community Baffled By Man Whose Waist 32 With Some Pants, 33 With Others

- link here: http://kamu.si/deepl
